
AI vs. Manual Bottleneck Detection

Bottlenecks slow down processes and cost businesses up to 30% in throughput. Whether you're dealing with inefficiencies, delays, or rising costs, identifying bottlenecks is critical to improving performance. But how should you approach this? You have two main options: manual detection or AI-driven detection.

Manual detection relies on observation, process mapping, and tracking metrics. It's cost-effective for small setups but struggles with large, complex systems. Human error, scalability issues, and the inability to keep up with shifting bottlenecks are common drawbacks.

AI detection uses tools like machine learning and real-time data analysis to pinpoint and predict bottlenecks. It excels in speed, accuracy, and scalability but requires a strong digital infrastructure and upfront investment.

Key takeaway: AI is best for complex, high-volume workflows, while manual methods suit simpler, low-cost environments. For the best results, many businesses combine both approaches, blending AI's precision with human judgment for a balanced solution.


Manual Bottleneck Detection Methods

Manual bottleneck detection relies on human observation to spot areas where processes slow down. While these methods have been around for decades, they remain practical and effective, especially when applied by experienced professionals.

Direct Observation and Process Mapping

One of the simplest ways to detect bottlenecks is the Bottleneck Walk, a form of Gemba Walk. This involves physically walking through your workflow or production line to observe how tasks move through the system and where they stall.

During these walks, you’re looking for two key signs: a starved process (waiting for materials, indicating an upstream bottleneck) or a blocked process (unable to move forward, signaling a downstream bottleneck). Christoph Roser, a Professor of Production Management, explains:

Whenever the process is waiting for something else, it cannot be the bottleneck. Even more, if we know the process is not the bottleneck, we can say in which direction the bottleneck is.

Even tiny delays - like a pause of 0.25 seconds between tasks - can signal a bottleneck. These micro-delays might be hard for automated systems to catch but are noticeable during manual observation. A helpful technique is the "arrows method": mark each station with an arrow pointing to the suspected bottleneck. Where the arrows converge, you’ll find the constraint.
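The arrows method can be sketched in a few lines of Python. This is a minimal illustration, not a standard tool: the state labels (`"starved"`, `"blocked"`, `"running"`) and the vote-counting rule are assumptions chosen to mirror the description above, and the stations are assumed to form a single serial line.

```python
def arrow_direction(state: str) -> int:
    """Return +1 if the bottleneck lies downstream, -1 if upstream, 0 if no signal."""
    if state == "blocked":   # cannot hand work on, so the constraint is downstream
        return 1
    if state == "starved":   # waiting for input, so the constraint is upstream
        return -1
    return 0


def suspected_bottlenecks(states: list[str]) -> list[int]:
    """Find the station indices where the arrows converge.

    Each waiting station casts one vote for every station in the direction
    its arrow points. A station that is itself waiting is excluded, because
    a process waiting on something else cannot be the bottleneck.
    """
    n = len(states)
    votes = [0] * n
    for i, state in enumerate(states):
        direction = arrow_direction(state)
        if direction > 0:
            targets = range(i + 1, n)
        elif direction < 0:
            targets = range(0, i)
        else:
            continue
        for j in targets:
            votes[j] += 1

    candidates = [j for j in range(n) if arrow_direction(states[j]) == 0]
    if not candidates:
        return []
    best = max(votes[j] for j in candidates)
    if best == 0:            # no arrows observed at all
        return []
    return [j for j in candidates if votes[j] == best]


# Hypothetical five-station line observed during one walk.
line = ["blocked", "blocked", "running", "starved", "starved"]
print(suspected_bottlenecks(line))
```

In this hypothetical line, the two upstream stations point downstream and the two downstream stations point upstream, so the arrows meet at the third station (index 2).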

Inventory levels also provide visual clues. For example:

Buffers less than 1/3 full may indicate an upstream bottleneck.

Buffers more than 2/3 full suggest a downstream bottleneck.

This approach works even without formal capacity limits, as workers can often intuitively judge whether inventory feels "too full" or "too empty".
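Where a buffer does have a known capacity, the one-third and two-thirds rule of thumb can be written down directly. The sketch below assumes a numeric capacity is available (the function name and return labels are illustrative); without one, the judgment stays with the person on the floor.

```python
def buffer_signal(level: float, capacity: float) -> str:
    """Classify a buffer reading using the 1/3 and 2/3 rule of thumb."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    fill = level / capacity
    if fill < 1 / 3:
        return "upstream"      # buffer running empty: the constraint feeds this buffer
    if fill > 2 / 3:
        return "downstream"    # buffer filling up: the constraint drains this buffer
    return "inconclusive"


# Hypothetical readings for a buffer that holds 12 parts.
for parts in (2, 6, 10):
    print(parts, buffer_signal(parts, 12))
```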

For a more structured view, try Value-Stream Mapping (VSM). This technique maps every process step, distinguishing between value-adding activities (what customers care about) and waste (like redundant approvals or excessive handoffs). By visualizing the entire flow, you can pinpoint where work accumulates and identify constraints.

While visual tools are powerful, combining them with performance metrics can sharpen your analysis.

Analyzing Key Metrics

Manual detection also involves tracking key performance metrics to complement observations. Metrics like cycle time (time per step), throughput (output rates), and resource utilization (capacity usage) can reveal bottlenecks.

In many cases, manual monitoring relies on simple tools like paper logs or verbal reporting. Workers record idle times between steps, note queue lengths, and track backlogs. Comparing a step's capacity to its output often highlights the process with the lowest throughput - the bottleneck.
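As a rough illustration of that comparison, the sketch below takes hand-recorded capacities per step and reports the step with the lowest rate, along with each step's utilization at the resulting line rate. The step names and figures are hypothetical, and the model assumes a simple serial line in which overall output equals the slowest step.

```python
def find_bottleneck(capacity_per_hour: dict[str, float]) -> tuple[str, float]:
    """Return the step with the lowest capacity and that capacity."""
    step = min(capacity_per_hour, key=capacity_per_hour.get)
    return step, capacity_per_hour[step]


def utilization_at_line_rate(capacity_per_hour: dict[str, float]) -> dict[str, float]:
    """Utilization of each step when the line runs at the bottleneck's rate."""
    _, line_rate = find_bottleneck(capacity_per_hour)
    return {step: line_rate / cap for step, cap in capacity_per_hour.items()}


# Hypothetical capacities (units per hour) copied from a paper log.
rates = {"cutting": 60, "welding": 42, "painting": 55, "packing": 70}

step, rate = find_bottleneck(rates)
print(f"Bottleneck: {step} at {rate} units/hour")
for name, util in utilization_at_line_rate(rates).items():
    print(f"{name}: {util:.0%} utilized")
```

A step running at or near 100% utilization while its neighbors sit well below that is the usual signature of the constraint.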

A practical strategy is to rank bottlenecks by their impact. If you identify multiple constraints, prioritize them based on how much they affect overall throughput or revenue. This ensures you focus on the areas that bring the most benefit. Pay close attention to handoff points, where work transitions between team members, as these spots are common sources of delays and errors.

Interestingly, about 30% of bottlenecks occur in less obvious areas like logistics or manual data reconciliation. This is where human intuition plays a critical role, helping spot constraints that automated systems might overlook.

AI-Driven Bottleneck Detection Methods

Traditional methods of identifying bottlenecks often rely on human observation, but AI-driven techniques take a different approach. By using digital event logs and real-time data, these methods create a detailed map of actual process flows. They track every transaction, handoff, and delay, offering an objective view that often challenges assumptions. Unlike manual approaches, AI methods provide scalability and instant feedback, making them a powerful complement to human efforts.

Process Mining and Real-Time Data Analysis

AI tools use event logs to uncover the real workflow, highlighting unofficial steps, workarounds, and hidden processes that manual observation might miss. Instead of relying on static diagrams or interviews, process mining dives into thousands of transactions to reveal how work truly flows. As Adnan Masood, PhD, puts it:

Process mining turns event data... into factual maps of how work actually flows. Unlike interviews, workshops, or static BPMN diagrams, it is objective, scalable, and interactive.

One of the standout benefits of AI is its ability to differentiate between activity time (the time spent working on a task) and wait time (the time work sits idle). Manual methods often blur this distinction, leading teams to focus on the wrong fixes, like training or automation, when the actual problem lies in queue management. Real-time analysis allows for immediate adjustments to workflows, bypassing the lengthy manual review process. For instance, in an insurance setting, AI-driven process intelligence reduced claim cycle times from 8.3 days to 3.8 days - a reduction of roughly 54%.
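The activity-versus-wait split is straightforward to compute once an event log records a start and end time for each step. The following is a minimal sketch, assuming a log of `(case_id, activity, start, end)` tuples. That schema and the sample claims are illustrative and not taken from any specific process mining product.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def split_activity_and_wait(events):
    """Sum hands-on time per activity and idle time spent waiting before it.

    events: iterable of (case_id, activity, start, end) tuples.
    Returns (activity_time, wait_before), both dicts keyed by activity name.
    """
    by_case = defaultdict(list)
    for case_id, activity, start, end in events:
        by_case[case_id].append((start, end, activity))

    activity_time = defaultdict(timedelta)
    wait_before = defaultdict(timedelta)
    for steps in by_case.values():
        steps.sort()                      # chronological order within a case
        prev_end = None
        for start, end, activity in steps:
            activity_time[activity] += end - start
            if prev_end is not None and start > prev_end:
                wait_before[activity] += start - prev_end
            prev_end = end
    return dict(activity_time), dict(wait_before)


# Hypothetical log for two insurance claims.
events = [
    ("C1", "intake", datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 9, 30)),
    ("C1", "review", datetime(2024, 1, 8, 13, 30), datetime(2024, 1, 8, 14, 0)),
    ("C2", "intake", datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 8, 10, 20)),
    ("C2", "review", datetime(2024, 1, 8, 15, 20), datetime(2024, 1, 8, 16, 0)),
]

activity, wait = split_activity_and_wait(events)
for name in activity:
    print(name, "worked:", activity[name], "waited before:", wait.get(name, timedelta(0)))
```

In this made-up log, the review step takes 70 minutes of actual work but claims sit idle for 9 hours before it starts, which points at the queue rather than at reviewer speed.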

These insights also set the stage for machine learning to predict and prevent future bottlenecks.

Machine Learning and Predictive Analytics

Machine learning takes bottleneck management a step further by moving from reactive solutions to proactive predictions. By analyzing massive amounts of shop floor data, ML models can uncover patterns and correlations that might escape human detection. Techniques like "Bottleneck Mining" apply sensitivity analysis to system throughput, pinpointing bottlenecks based on their overall impact.
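To make the idea of sensitivity analysis concrete, here is a deliberately simplified sketch. It is not the "Bottleneck Mining" technique itself: it assumes a serial line whose throughput equals its slowest station, and the station names and capacities are hypothetical. Each station's capacity is raised by a fixed percentage in turn, and the resulting change in line throughput is recorded.

```python
def line_throughput(capacities: dict[str, float]) -> float:
    """Throughput of a serial line, assumed equal to its slowest station."""
    return min(capacities.values())


def throughput_sensitivity(capacities: dict[str, float], bump_pct: int = 10) -> dict[str, float]:
    """Throughput gained by raising each station's capacity by bump_pct percent."""
    base = line_throughput(capacities)
    gains = {}
    for name, cap in capacities.items():
        trial = dict(capacities)
        trial[name] = cap * (100 + bump_pct) / 100
        gains[name] = line_throughput(trial) - base
    return gains


# Hypothetical three-station line (units per hour).
stations = {"A": 50, "B": 40, "C": 45}
gains = throughput_sensitivity(stations)
ranked = sorted(gains, key=gains.get, reverse=True)
print(gains)
print("Most sensitive station:", ranked[0])
```

Only station B moves the line's output here; improving A or C changes nothing, which is what marks B as the constraint. Real production systems with buffers and variability would need a simulation or data-driven model in place of the `min` rule.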

Predictive analytics use historical data to forecast where bottlenecks are likely to form, allowing teams to intervene before issues arise. This is especially useful in addressing "shiftiness", where bottlenecks move due to changes in process variability, product mix, or previous adjustments. Anders Skoogh, a professor in production systems, explains:

Throughput bottlenecks are machines which constrain throughput. They may occur in a system due to variability in the time duration of production flow disturbances, such as unplanned stops.

Studies show that real-world production systems often experience throughput losses of 20% to 30%. In one simulation for incentive compensation processing, increasing headcount - a typical manual solution - improved results by just 12%. In contrast, AI-driven automation achieved over a 70% improvement. AI's ability to analyze systems with hundreds of machines operating simultaneously makes it indispensable for tackling the complexities of modern production environments.

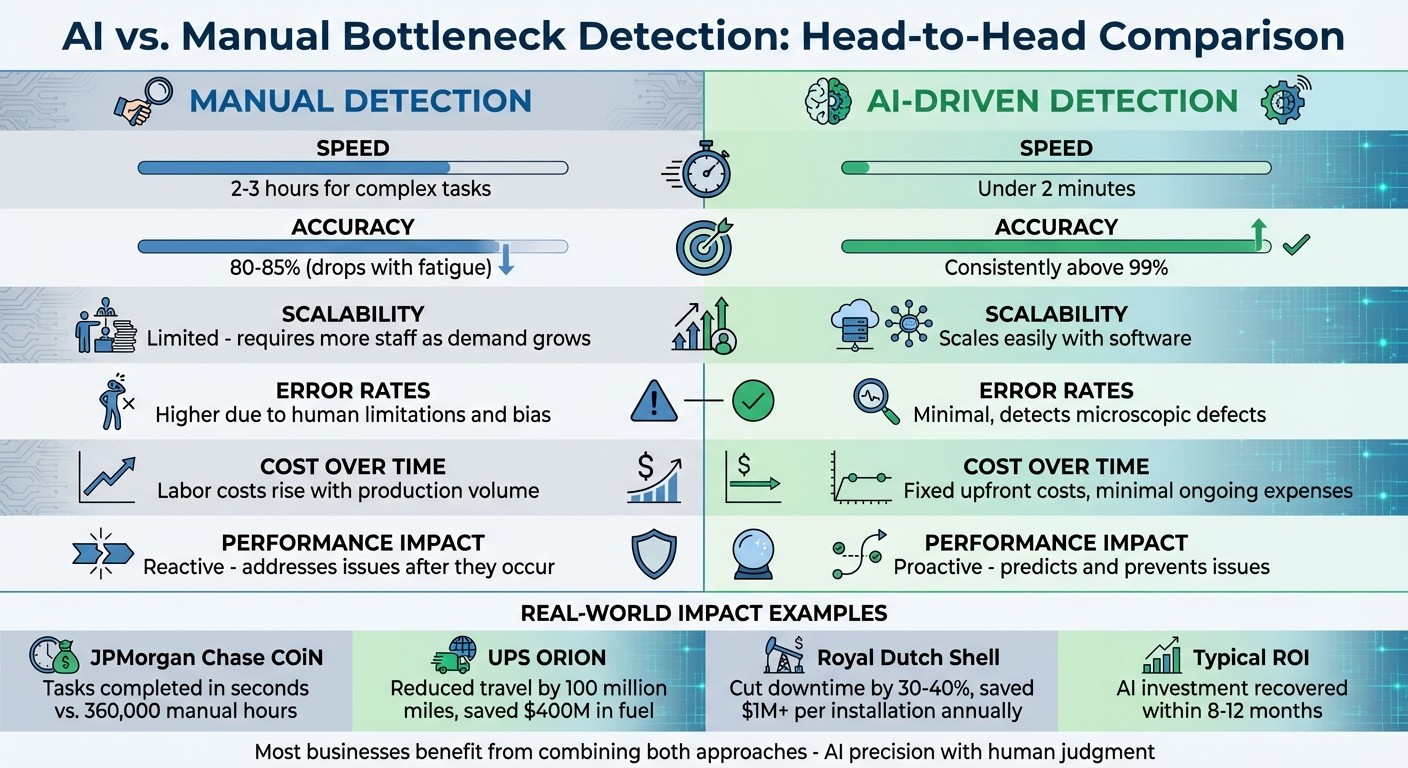

AI vs. Manual Detection: Side-by-Side Comparison

AI vs Manual Bottleneck Detection: Speed, Accuracy, and Cost Comparison

Comparison Table

Here’s a quick look at how AI-driven detection stacks up against manual methods:

| Factor | Manual Detection | AI-Driven Detection |
|---|---|---|
| Speed | Takes 2–3 hours for complex tasks | Completes tasks in under 2 minutes |
| Accuracy | ~80–85%, but drops with fatigue | Consistently above 99% |
| Scalability | Limited - requires more staff as demand grows | Scales easily with software |
| Error Rates | Higher due to human limitations and bias | Minimal, even detecting microscopic defects |
| Cost Over Time | Labor costs rise with production volume | Fixed upfront costs and minimal ongoing expenses |
| Performance Impact | Reactive - addresses issues after they occur | Proactive - predicts and prevents issues |

This table lays the groundwork for understanding the practical implications of each method.

Analysis of the Comparison

The comparison reveals a clear distinction between the two approaches. Manual detection offers adaptability and contextual awareness, making it suitable for unique or unexpected situations. However, human working memory holds only about 7±2 items at a time, which limits how much of a large process one observer can track. AI, on the other hand, processes millions of data points in real time, offering far greater speed and consistency.

Cost Dynamics

When it comes to expenses, manual detection is tied to labor costs, which increase as production scales. For example, a three-shift operation employing 12 inspectors at US$45,000 each racks up approximately US$540,000 annually. By contrast, AI requires a one-time investment of around US$120,000, with operational costs remaining predictable. Most businesses recover their AI investment within 8–12 months.
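The arithmetic behind these figures is easy to check. The short sketch below reproduces the annual labor cost and works out the net monthly savings implied by an 8 to 12 month payback on the US$120,000 investment. The helper name is illustrative, and the calculation ignores ongoing AI operating costs, which the figures above do not specify.

```python
inspectors = 12
salary_usd = 45_000
ai_investment_usd = 120_000

# Annual labor cost of the manual inspection team.
annual_labor_usd = inspectors * salary_usd


def implied_monthly_savings(investment_usd: float, payback_months: int) -> float:
    """Net monthly savings needed to recover the investment in the given time."""
    return investment_usd / payback_months


fast_payback = implied_monthly_savings(ai_investment_usd, 8)
slow_payback = implied_monthly_savings(ai_investment_usd, 12)

print(f"Annual labor cost: US${annual_labor_usd:,}")
print(f"Implied net savings: US${slow_payback:,.0f} to US${fast_payback:,.0f} per month")
```

An 8 to 12 month payback therefore corresponds to net savings of about US$10,000 to US$15,000 per month, well below the roughly US$45,000 per month spent on the inspection team in this example.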

Accuracy and Efficiency

Human accuracy typically sits around 80–85% and drops further with fatigue or poor environmental conditions. AI exceeds 99% accuracy, making it a more reliable choice for quality control. As Andre, a business operations analyst, aptly notes:

Manual decision-making increasingly represents a competitive liability rather than an asset.

Real-World Impact

The benefits of AI are evident in real-world scenarios. JPMorgan Chase’s COiN system, for instance, completed tasks in seconds that previously required 360,000 manual hours. UPS’s ORION system reduced annual travel by 100 million miles, saving US$400 million in fuel costs. Similarly, Royal Dutch Shell’s predictive maintenance strategies cut downtime by 30–40%, saving over US$1 million per installation each year.

Even in manufacturing, the financial impact is striking. Reducing defect escapes by just 1% in a facility producing 1,000,000 units annually means 10,000 fewer escaped units, which can translate to US$500,000 in direct savings (an implied cost of about US$50 per escaped unit). These examples highlight the transformative potential of AI in streamlining operations and cutting costs.

Manual Detection: Pros and Cons

Manual methods of bottleneck detection have their strengths and weaknesses, which determine how well they fit into different operational settings.

Advantages of Manual Detection

Manual detection offers some clear benefits, particularly for smaller setups or when quick fixes are needed. One major plus? The low upfront cost. Tools like the "5 Whys" don’t require fancy software, making them easy to implement for teams on a budget.

The hands-on approach is another big advantage. Frameworks such as 5S and Fishbone diagrams allow teams to visually and collaboratively tackle problems. This direct interaction not only helps resolve issues but also boosts team understanding and engagement. Plus, human insight plays a key role in adding context to patterns that automated systems might miss.

When it comes to contextual judgment, humans still have the upper hand. For instance, while automation is great at spotting trends, it can’t decide whether a process is unnecessarily complex or genuinely needed. Manual methods also work well for small, unique datasets or for exploratory reviews before committing to automation. In cases where the problem is straightforward and known, manual detection often proves to be the quickest solution.

That said, manual methods often falter when dealing with more intricate or dynamic systems.

Disadvantages of Manual Detection

Despite their practical benefits, manual methods come with significant limitations, especially in modern, fast-paced environments. Human error and bias, along with outdated manual data, can compromise the reliability of findings and slow down response times.

One of the biggest hurdles is scalability. While manual methods can handle simple production lines, they struggle with complex systems involving parallel workflows, closed loops, or rework processes. Instead of pinpointing the root cause, they often highlight symptoms like high work-in-progress levels or long queues. Additionally, manual methods may overlook hidden delays where work sits idle between processes - often the real source of bottlenecks.

Another critical issue is that bottlenecks are not static. In real-world production, constraints shift frequently due to changes in product mix, staffing, or other variables. Manual observation provides only a momentary snapshot, often becoming outdated before any action is taken.

These limitations underscore the importance of comparing manual methods with AI-driven approaches to find the best fit for optimizing operations.

AI Detection: Pros and Cons

AI-powered detection brings impressive capabilities to the table, but it’s not without its challenges. Understanding both sides is crucial for deciding how to incorporate these tools into modern production workflows. Let’s break down the key strengths and limitations of AI detection compared to manual methods.

Advantages of AI Detection

AI shines in areas where manual approaches often fall short. One standout feature is real-time monitoring, which works around the clock to spot issues as they arise, rather than waiting for periodic reviews to uncover problems. This constant oversight helps prevent minor issues from snowballing into expensive disruptions.

Another game-changer is AI’s predictive abilities, which go beyond simply analyzing past mistakes. AI can forecast potential workload spikes or delays, enabling teams to allocate resources more effectively. For example, an auto insurance company used automated data flows to cut cycle times from 8.3 days to 3.8 days - a reduction of roughly 54% - without adding staff.

Scalability is another area where AI stands out. It can process data from hundreds of machines and evaluate complex factory systems simultaneously, tasks that would be too slow or costly for manual methods. AI also pinpoints the root causes of bottlenecks by distinguishing between activity time and wait time. One consulting firm used AI to analyze an incentive compensation process and found that fragmented data collection - not analyst bandwidth - was the real bottleneck. By automating data extraction and validation, they identified over 70% improvement potential in cycle time.

Additionally, AI’s simulation tools allow teams to test solutions virtually before implementing them. This reduces the risk of creating new problems while ensuring that changes deliver the desired outcomes.

Disadvantages of AI Detection

Despite its strengths, AI detection comes with some hurdles. High implementation costs can make it inaccessible for smaller businesses. Setting up a robust digital infrastructure and collecting 30 to 60 days of data before generating actionable insights can be a significant investment.

AI systems also depend heavily on high-quality data. If your event logs or shop floor data are incomplete or poorly integrated, the AI might miss critical issues. This reliance on clean, well-organized data can make it difficult for companies with less advanced digital systems to benefit fully from AI tools.

Another challenge is the ever-changing nature of bottlenecks. These constraints can shift due to process variability, product mix changes, or even adjustments made to fix previous issues. AI models must be adaptable to these changes, or they’ll require frequent manual updates, which adds to maintenance costs and complexity.

Finally, there’s the risk of contextual blindness. While AI excels at identifying patterns, it might overlook subtleties that human judgment can catch - like whether a process is unnecessarily complicated or genuinely essential. Studies show that production systems often experience throughput losses of 20% to 30%, but understanding the root causes of these losses often requires blending AI insights with human expertise.

How to Choose Between AI and Manual Detection

Factors to Consider

When deciding between AI and manual detection, practical considerations are key. For AI to be effective, your operations need to rely on digitized data. If your processes are built around paper forms or verbal communication, manual observation and interviews are likely your best bet.

AI shines in high-volume, complex workflows where it can process large datasets. On the other hand, simpler or low-volume tasks - like custom orders processed only a few times a month - lack the data volume needed for AI to provide meaningful insights. In environments with sprawling, interconnected systems, AI can map out intricate workflows, uncovering hidden inefficiencies like unofficial workarounds or redundant steps that aren't documented. However, if your processes involve minimal handoffs, the investment in AI might not pay off.

Timing is another critical factor. AI offers 24/7 real-time monitoring and alerts when performance dips, whereas manual methods rely on periodic reviews, such as monthly check-ins or quarterly audits, which are inherently reactive.
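A real-time alert of this kind can be as simple as a rolling average compared against a floor. The sketch below is one minimal way to do it. The class name, window size, and threshold are assumptions for illustration, and production monitoring tools typically use more sophisticated baselines.

```python
from collections import deque


class ThroughputMonitor:
    """Raise an alert when the rolling mean throughput drops below a floor."""

    def __init__(self, window: int, min_rate: float):
        self.samples = deque(maxlen=window)   # keeps only the latest readings
        self.min_rate = min_rate

    def add(self, units_per_hour: float) -> bool:
        """Record a reading; return True if the rolling mean is below min_rate."""
        self.samples.append(units_per_hour)
        if len(self.samples) < self.samples.maxlen:
            return False                      # not enough data yet
        return sum(self.samples) / len(self.samples) < self.min_rate


# Hypothetical hourly readings against a floor of 40 units per hour.
monitor = ThroughputMonitor(window=3, min_rate=40)
for reading in [45, 44, 43, 38, 36]:
    if monitor.add(reading):
        print(f"Alert: rolling throughput below floor after reading {reading}")
```

Averaging over a window keeps a single slow hour from triggering an alert while still catching a sustained dip long before a monthly review would.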

Budget constraints also play a major role. AI requires upfront investment in digital infrastructure and a data collection period of 30 to 60 days before producing actionable insights. For smaller operations with limited resources, manual methods might be a more accessible starting point, even if they don’t provide the same depth of analysis.

"The bottleneck determines your throughput. Everything else is secondary." - Skan.ai

AI is particularly adept at identifying wait-time bottlenecks, where work sits idle in queues. Meanwhile, manual methods are better suited to spotting activity-related issues, such as inefficient procedures or gaps in employee training.

Given these factors, a combined approach often yields the best results, blending the strengths of AI and human analysis to tackle bottlenecks effectively.

Combining AI and Manual Methods

The most effective strategy often involves integrating AI with manual oversight. A great example comes from Avalign Technologies, a medical device manufacturer that adopted the MachineMetrics platform in November 2025 across four U.S. facilities. Under OEE Director Matt Townsend, they combined real-time machine data with human expertise, leading to a 25% to 30% boost in Overall Equipment Effectiveness and millions of dollars in added capacity - all without investing in new equipment.

This hybrid approach works because AI and manual methods complement each other. AI excels at data collection and pattern recognition, offering unbiased, real-time insights. Meanwhile, manual expertise is invaluable for root cause analysis, using tools like the "5 Whys" or fishbone diagrams to uncover why a bottleneck exists.

Management often speculates about root causes, whereas AI can pinpoint the actual bottleneck from the data. When that bottleneck turns out to be manual data reconciliation, automating it frees staff to focus on core tasks and leads to significant improvements in cycle times.

Simulation tools act as a bridge between AI insights and manual decision-making. Before making changes like reassigning staff or rearranging equipment, AI simulations can model the potential impact, avoiding the risk of "bottleneck shifting", where solving one issue creates another downstream. Once AI identifies the problem, humans validate its findings against on-the-ground realities and make informed decisions about next steps.
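A toy what-if check shows why this matters. The sketch below is far simpler than a real simulation or digital twin: it assumes a serial line whose output equals its slowest station, and the station names and capacities are hypothetical. It compares the bottleneck before and after a proposed change.

```python
def bottleneck(capacities: dict[str, float]) -> tuple[str, float]:
    """Slowest station and its capacity, assuming a simple serial line."""
    name = min(capacities, key=capacities.get)
    return name, capacities[name]


def what_if(capacities: dict[str, float], changes: dict[str, float]):
    """Compare the bottleneck before and after applying proposed capacities."""
    before = bottleneck(capacities)
    after = bottleneck({**capacities, **changes})
    return before, after


# Hypothetical line (units per hour) and a proposal to add staff at assembly.
line = {"molding": 50, "assembly": 40, "inspection": 45}
before, after = what_if(line, {"assembly": 60})

print("Before:", before)
print("After: ", after)
print("Throughput gain:", after[1] - before[1])
```

Raising assembly from 40 to 60 units per hour lifts the line by only 5 units per hour, because inspection becomes the new constraint at 45. Seeing that before reassigning staff is exactly the point of testing changes virtually.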

"Manual production monitoring is fraught with human errors such as missing data, transposed numbers, and bias. It's also time-lagged, and data is often outdated by the time a problem is identified." - MachineMetrics

To get started, deploy AI or process mining tools for 30 to 60 days to establish a baseline of how your workflows operate. AI can pinpoint where the bottleneck is, while manual investigation determines why it’s happening - whether due to training gaps, equipment failures, or design flaws. This division of labor ensures both accuracy and efficiency, all while keeping costs manageable.

Rebel Force's Approach to Bottleneck Detection

How Rebel Force Combines AI and Human Expertise

Rebel Force takes a balanced approach by blending AI technology with human expertise to tackle operational blockages effectively. Their four-phase process merges advanced AI diagnostics with the nuanced understanding of human teams, addressing both technical challenges and organizational hurdles. This partnership ensures that no critical detail is missed - something that fully automated systems might struggle to achieve.

Here’s how it works:

Diagnose Phase: AI tools perform process mining to map out real workflows, capturing every step, handoff, and period of waiting.

Design Phase: AI creates Digital Twins and runs simulations to test potential solutions before they are rolled out.

Execute Phase: Human teams collaborate with your staff to implement these solutions effectively.

Validate Phase: Performance metrics track the results, ensuring measurable ROI.

This collaborative approach doesn’t just identify issues; it turns insights into action. By combining AI’s speed and precision with human adaptability, Rebel Force helps reduce cycle times, minimize errors, and ensure smoother operations across the board.

Delivering Measurable Results

Rebel Force's method stands out by clearly distinguishing between activity time and wait time. AI-driven analysis uncovers hidden bottlenecks like fragmented systems, unseen data-wrangling tasks, and repetitive rework cycles - issues that traditional methods often miss. This shift from reactive problem-solving to predictive optimization allows businesses to stay ahead of potential challenges.

Whether opting for an Enablement Sprint (a focused 12-week program) or a 12-month Enablement Program, the goal remains the same: measurable improvements. By leveraging data-driven insights, Rebel Force ensures scalable process enhancements that deliver consistent ROI across your operations.

Conclusion

Deciding between AI-driven and manual bottleneck detection isn’t an either-or situation - combining both methods often delivers the best results. Manual techniques rely on observation and historical data, offering straightforward and cost-effective solutions in stable environments. However, they can fall short due to their reactive nature and the potential for human error. In contrast, AI-driven detection excels in real-time monitoring, predicting surges, and uncovering hidden constraints across multiple machines. The caveat? AI demands a solid digital infrastructure and high-quality data to work effectively. As Dave Westrom from MachineMetrics points out, manual production monitoring is often plagued by errors and delays.

Given these complementary strengths and weaknesses, a hybrid approach proves highly effective. By merging AI’s speed and predictive power with human judgment, businesses can identify and address bottlenecks more efficiently.

Rebel Force exemplifies this with their four-phase process, which integrates AI diagnostics and human expertise. This approach shifts operations from reactive problem-solving to proactive optimization, driving tangible improvements in efficiency and capacity. Whether you’re tackling a single bottleneck or managing a complex system, the right detection strategy hinges on your specific operational needs, the quality of your data, and your timeline.

In production systems, bottlenecks typically account for throughput losses of 20% to 30%. Addressing these constraints - whether through AI, manual methods, or a combination - can significantly enhance performance.

FAQs

How do I know if AI is worth the upfront cost for my process?

When deciding if AI is a smart financial move, it’s crucial to weigh the potential return on investment (ROI), long-term savings, and efficiency improvements it can bring. Studies show that AI has the potential to cut operational costs by up to 28%, while also enhancing accuracy and making processes scalable.

Think about the upfront costs in relation to benefits like fewer errors and faster processing times. For example, AI can streamline repetitive tasks, allowing teams to focus on more critical work. Tools like process mining are especially helpful - they can uncover hidden opportunities for ROI, ensuring AI is solving real problems instead of being implemented just for the sake of it.

Ultimately, evaluating these factors can help determine whether AI is a valuable addition to your operations.

What data is needed for AI to reliably detect bottlenecks?

AI needs detailed, high-quality data to identify bottlenecks reliably. For process mining, that means event logs in which each record carries at least a case identifier, an activity name, and a timestamp, ideally alongside resource usage, performance metrics, and real-time operational data. Gaps or inaccuracies in these records translate directly into missed or misplaced bottlenecks, so accuracy and completeness are essential.

How do I stop bottlenecks from shifting after I fix one?

Bottlenecks tend to shift because of system variability and the ever-changing dynamics within processes. To stay ahead of these changes, it's crucial to keep a close eye on performance. Tools like real-time analytics can provide the insights needed to tackle these challenges head-on.

Taking a broad perspective is also key. Regular process reviews and advanced techniques - such as digital twins or process mining - can uncover the root causes behind these shifting constraints. By addressing these issues effectively, you can ensure bottlenecks are managed in a way that prevents recurring problems.