AI Enablement

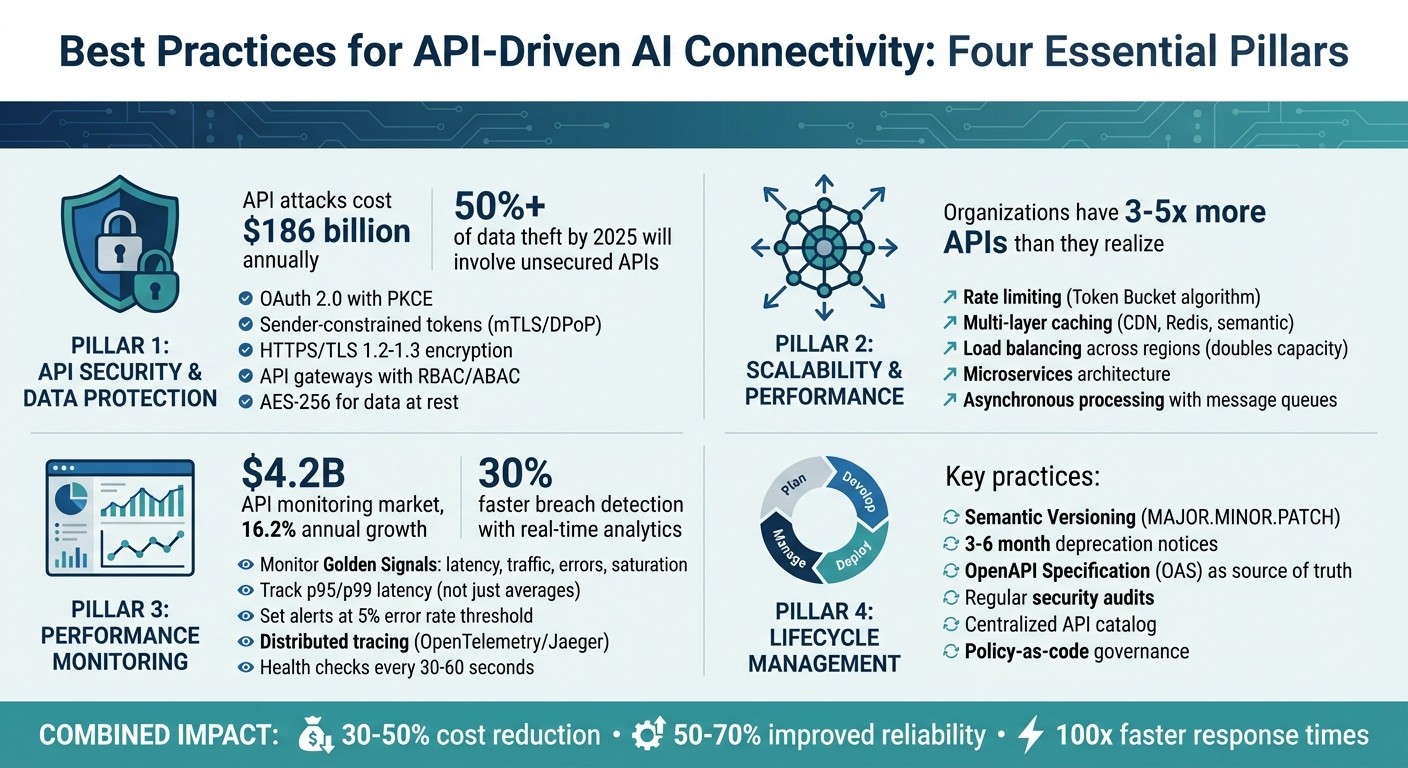

Best Practices for API-Driven AI Connectivity

APIs are the backbone of AI systems, enabling seamless communication and data exchange across platforms. But with AI workloads growing exponentially, managing API security, scalability, and performance has become more challenging than ever. Here’s a quick breakdown of the key strategies to tackle these issues:

Security: Use OAuth 2.0, sender-constrained tokens, HTTPS/TLS encryption, and API gateways to protect sensitive data and prevent attacks.

Scalability: Implement rate limiting, caching, and load balancing to handle high traffic and reduce costs.

Performance Monitoring: Track latency, errors, and traffic in real-time to detect anomalies and maintain reliability.

Lifecycle Management: Use versioning, regular audits, and structured deprecation policies to keep APIs functional and secure.

These practices ensure that API-driven AI systems remain secure, efficient, and reliable, even under heavy workloads.

Four Pillars of API-Driven AI Connectivity Best Practices

From Zero to AI: Building Smarter Apps with AI API Integrations

API Security and Data Protection

The first pillar of this checklist zeroes in on protecting API connections - a cornerstone for dependable AI integration. Keeping AI-driven APIs secure involves a layered approach to counter both traditional threats and emerging risks. It's a critical issue, as API-related attacks cost businesses around $186 billion annually, and by 2025, over 50% of data theft is expected to involve unsecured APIs. For AI systems, a single vulnerable endpoint could expose sensitive training data, making robust API security essential. Here's a closer look at some effective strategies.

Authentication and Authorization Methods

Strong authentication and authorization practices are non-negotiable. For instance, OAuth 2.0 with PKCE is a practical safeguard against authorization code injection and cross-site request forgery attacks. The industry is phasing out older, less secure grants like the Implicit Grant and Resource Owner Password Credentials Grant due to their susceptibility to token theft. For AI systems managing sensitive tasks, sender-constrained tokens (via mTLS or DPoP) are a smart choice, as they tie tokens to specific senders, rendering stolen tokens useless.

Token management also plays a pivotal role. Use opaque tokens for external or third-party APIs to maintain privacy, while JSON Web Tokens (JWTs) are better suited for internal service-to-service communication, thanks to their claim-based logic. To ensure tokens are used appropriately, implement audience restrictions via the aud claim and enforce scope-based access, limiting tokens to specific servers and actions. As Michał Trojanowski from Curity points out:

"Just because your API security isn't breached doesn't mean that everything is fine. You should gather metrics and log usage of your API to catch any unwanted behavior".

Data Encryption Standards

Encryption is another cornerstone of API security. Start with HTTPS secured by TLS 1.2 or 1.3, which safeguards credentials and ensures data integrity during transit. Regularly updating security certificates is crucial to avoid vulnerabilities. For privileged services or machine-to-machine communication, mTLS provides mutual authentication, verifying both client and server identities. Strengthen this further with HTTP Strict Transport Security (HSTS) headers, which enforce encrypted HTTPS connections and guard against protocol downgrade attacks.

When handling highly sensitive data, application-layer encryption like Javascript Object Signing and Encryption (JOSE) can secure JSON/REST payloads. Additionally, encrypt data at rest using standards such as AES-256, especially in caches, logs, and backend systems. Avoid self-signed certificates (except in testing environments), and rely on centralized OAuth servers with JSON Web Key Sets (JWKS) for seamless signing key distribution and rotation.

API Gateways for Security Control

API gateways act as a centralized hub for managing security. They integrate authentication, authorization, and rate limiting into a unified control system. As MuleSoft explains:

"The API gateway acts as a deterministic security layer. By intermediating agent-to-agent and agent-to-system interactions, the gateway protects the enterprise and its data".

Modern gateways now feature AI-specific protections, such as defenses against prompt injection and the ability to mask personally identifiable information (PII). They also enforce fine-grained authorization using Role-Based Access Control (RBAC) or Attribute-Based Access Control (ABAC), limiting access based on roles or contextual factors.

Gateways enhance security further through schema validation and input sanitization, which block malicious payloads like SQL injection or cross-site scripting before they reach the AI's core logic. Traffic and rate-limiting policies help prevent abuse, such as DDoS attacks, by controlling request volumes based on API keys, IP reputation, or Service Level Agreements. With organizations often underestimating their API inventory - having 3-5 times more APIs than they realize - gateways also help manage "shadow APIs", reducing hidden vulnerabilities.

Scalability and Performance Optimization

To keep API-driven AI workflows running efficiently even under heavy loads, scalability is key. After securing APIs, the next step is to design infrastructure that can handle dynamic AI workloads. Unlike traditional systems, AI APIs face unique scaling challenges due to multi-dimensional limits like Requests per Minute, Tokens per Minute, and Requests per Day. Here’s how to manage traffic, allocate resources effectively, and design APIs that can scale.

Rate Limiting and Throttling Controls

Rate limiting is essential to prevent system overload, but it needs to be adaptive and multi-layered. Combine strategies like client backoff, server quotas, and abuse detection to create a robust system. For instance, OpenAI enforces a limit of 60,000 requests per minute, broken down into 1,000 requests per second. Your rate-limiting mechanisms should account for such short enforcement windows.

The Token Bucket algorithm is a great way to allow short-term traffic bursts while maintaining steady average limits. In distributed systems, tools like Redis can centralize request tracking in real time, ensuring consistent enforcement across servers. Instead of a one-size-fits-all approach, apply granular limits based on factors like user roles, subscription tiers, or specific API endpoints.

To help clients self-regulate, use standard headers such as X-RateLimit-Limit, X-RateLimit-Remaining, and Retry-After. Additionally, implement exponential backoff with random jitter (varying delays between 75–125%) to prevent synchronized retries. Without proper backoff, a single misconfigured client could trigger a retry storm, potentially racking up costs of US$15,000 in just 48 hours.

Caching and Load Balancing Techniques

Pair rate limiting with caching and load balancing to tackle performance bottlenecks. Caching can significantly reduce the strain on expensive AI models by reusing responses. Use a multi-layer caching strategy: HTTP Cache-Control headers for client-side caching, edge caching via CDNs, and server-side caching with tools like Redis or Memcached. Semantic caching, which reuses responses for similar queries, can also cut down on costly model calls.

Load balancing spreads incoming traffic across multiple API instances, preventing any single server from becoming overwhelmed. For APIs with regional quota limits, splitting traffic across regions can double capacity - for example, from 1,440 RPM in one region to 2,880 RPM across two. A spillover strategy can further optimize performance by routing traffic to pre-paid throughput instances first, then directing excess traffic to pay-as-you-go endpoints during demand spikes. To make horizontal load balancing effective, ensure your APIs are stateless, so any server instance can handle incoming requests.

Grouping API resources into backend pools and using circuit breakers can also help. Circuit breakers prevent repeated calls to failing or overloaded services, keeping the system stable. As Juliane Franze from Microsoft highlights:

"Adding an API Gateway in front of your AI endpoints is a best practice to add functionality without increasing the complexity of your application code".

Scalable API Architecture Design

Scalable APIs start with smart architectural choices that can adapt to changing workloads. A microservices architecture is ideal - it breaks APIs into smaller, independent services, letting you scale high-demand AI inference services separately while isolating faults.

For resource-intensive AI tasks, asynchronous processing is a game-changer. Use message queues like RabbitMQ or Kafka to offload heavy tasks to background jobs. Pair this with an event-driven architecture, where APIs react to events instead of constantly polling for updates. This approach improves resource usage and enables real-time updates.

Cloud-native tools and Kubernetes can manage resources dynamically, scaling AI components up or down based on demand. Prioritize horizontal scaling to ensure redundancy and fault tolerance. When dealing with large AI-generated datasets, switch to cursor-based pagination instead of offset-based methods to maintain performance as data grows.

API Performance Monitoring and Management

Once you've scaled your API infrastructure, keeping it running smoothly becomes a top priority. This is where real-time performance monitoring comes in. In 2023, the global API monitoring market was valued at about $4.2 billion, with projections showing a growth rate of 16.2% annually through 2030. Clearly, this is a vital area to focus on.

Real-Time Monitoring and Alerts

To effectively monitor your APIs, focus on the "Golden Signals" of performance: latency, traffic, errors, and saturation (resource usage). Average response times can be misleading, so take a closer look at metrics like p95 and p99 latency. For example, while the average response time might be 100 milliseconds, 1% of users could still experience delays of up to 2 seconds [37,42].

Pairing synthetic monitoring (which simulates user activity) with real user monitoring gives you both predictable benchmarks and insights into actual user experiences. API gateways like Apache APISIX and Kong are great tools for collecting metrics across microservices [37,38]. To avoid drowning in irrelevant alerts, use SLO burn rates and set thresholds over short rolling windows (e.g., 5-15 minutes). Also, confirm alerts from multiple geographic locations before escalating issues [42,43]. Companies using real-time analytics report detecting security breaches 30% faster.

These methods allow teams to quickly spot anomalies and conduct regular health checks to ensure everything’s running as expected.

Detecting and Fixing Anomalies

When monitoring, watch out for red flags like latency spikes, error surges (e.g., 4xx or 5xx responses), unusual traffic patterns hinting at DDoS attacks, or unexpected authentication failures [40,46]. Tools like Apigee's Advanced API Operations, which use machine learning, can quickly spot these patterns and provide dashboards for further investigation.

For deeper analysis, distributed tracing tools like OpenTelemetry or Jaeger are essential. They let you track a single request across multiple microservices, pinpointing where bottlenecks or failures occur [40,42]. Adding correlation IDs to your requests can also help trace issues back to their source. Many high-performing teams set alerts when error rates exceed 5%, as this often indicates a major upstream problem.

It’s also important to monitor for silent failures. A "200 OK" response doesn’t guarantee the data is accurate. Regularly validate response structures and business logic to catch these issues.

As Martin Norato Auer, VP of CX Observability Services at SAP, explains:

"We get Catchpoint alerts within seconds when a site is down. And we can, within three minutes, identify exactly where the issue is coming from and inform our customers and work with them".

While automated tools handle urgent issues, periodic health checks help maintain long-term reliability.

Scheduled API Health Checks

Real-time monitoring is great for catching immediate problems, but scheduled health checks ensure your API stays reliable over time. For high-traffic systems, these checks should run every 30 to 60 seconds, while lower-traffic systems might only need checks every 5 minutes [48,49,50].

Health checks should verify that:

Endpoints are reachable.

Critical endpoints respond in under 200 milliseconds.

Backend dependencies (like databases or third-party services) are functioning.

Responses meet expected data integrity standards [48,49,50].

Instead of a simple "healthy/unhealthy" status, design health check endpoints to return structured JSON with details for each module (e.g., "authentication: healthy" or "routing: degraded") [48,50]. Rotating checks across different regions can also help identify location-specific issues with CDNs or routing [49,45].

Integrating these health checks into your CI/CD pipeline ensures stability before and after deployments [48,45]. In fact, about 70% of developers report that data-driven insights from monitoring tools lead to faster and more effective resolutions of major performance issues. These practices are especially important for verifying the integrity of complex AI workloads that rely on API connectivity.

API Lifecycle Management

Managing APIs effectively goes beyond just ensuring they are secure and scalable - it’s about maintaining control from the initial design phase all the way to retirement. This approach ensures APIs remain reliable and perform consistently over time. Without a clear management strategy, organizations risk dealing with shadow APIs, outdated versions that linger indefinitely, and documentation that no longer reflects the current production environment.

Versioning and Documentation Standards

A strong versioning strategy is key to avoiding disruptions in AI integrations caused by breaking changes. One widely used approach is Semantic Versioning (SemVer), which follows the MAJOR.MINOR.PATCH format. Here’s how it works:

MAJOR: Incremented for breaking changes (e.g., changing a field's data type from String to Integer).

MINOR: Updated when adding new features, such as optional fields.

PATCH: Used for bug fixes.

For public APIs, path-based versioning (e.g., /v1/resource) is a practical choice since it works well with caching mechanisms. Another option, header-based versioning (e.g., X-API-Version), keeps URLs stable but may require more advanced caching strategies. Facebook, for instance, enforces a strict two-year lifecycle for major versions of its Graph API, while Google suggests a shorter timeline of around 180 days for transitioning from beta to stable versions.

As Beck aptly puts it:

"Breaking an API is cheap; rebuilding trust with partners and product teams is expensive".

To avoid accidental breaking changes, integrate tools like oasdiff or Spectral into your CI/CD pipeline. These tools can automatically detect issues before they reach production. Using the OpenAPI Specification (OAS) as the authoritative source for your API ensures consistency and aligns expectations for API consumers.

Audits and Maintenance Schedules

Once versioning is in place, regular audits become essential for maintaining security and functionality throughout the API’s lifecycle. Start by creating a centralized catalog that tracks critical details like each API’s owner, data classification, environment, and lifecycle status. This helps prevent undocumented endpoints and ensures better oversight.

Security audits should confirm that all endpoints meet modern standards, such as enforcing TLS 1.2+, implementing OAuth2/OIDC authentication, and including appropriate security headers. To streamline governance, adopt a policy-as-code approach. For example, integrate OpenAPI linting into your CI/CD pipeline to enforce adherence to your organization’s style guide.

Monitoring runtime performance is equally important. Key metrics to track include:

P95 latency: How fast your API performs under typical conditions.

Error rates: Identifying recurring issues.

Availability: Ensuring uptime meets your Service Level Objectives (SLOs).

Analyzing traffic patterns can help identify unused endpoints and track the usage of deprecated versions, making it easier to plan for their eventual removal. As Phil Sturgeon from Treblle explains:

"API governance is about applying policy, process, and tooling across the entire API lifecycle, not a one-time checklist".

Additionally, setting an error budget tied to your SLOs ensures that reliability improvements are prioritized when performance falls short.

Retiring Deprecated APIs

When it’s time to phase out an API, clear communication and careful timing are critical. Typically, deprecation notices are issued 3 to 6 months in advance, while major version retirements may require 12 to 18 months of notice. Use HTTP headers like Deprecation and Sunset to programmatically inform clients about upcoming changes.

Before fully retiring an API, audit its usage through API gateway logs. This helps identify shadow clients or high-usage consumers who may still rely on older versions. Running old and new versions side by side for a transitional period allows these users time to migrate. A structured sunset policy should include:

Announcement: Inform clients about the deprecation.

Soft freeze: Halt new feature development.

Read-only period: Allow access but restrict updates.

Complete shutdown: Fully retire the API.

This phased approach ensures a smoother transition for all stakeholders.

Conclusion

Summary of Best Practices

Creating dependable API-driven AI connectivity requires a thoughtful approach that balances security, performance, and cost management. Key security measures include using OAuth 2.0, JWT, and granular access controls like RBAC/ABAC. Encrypting data through HTTPS/TLS and implementing backend proxy patterns also prevents sensitive API keys from being exposed in frontend code.

For performance, strategies like intelligent routing and caching are essential. Choosing the right models based on task complexity can cut token costs by 30%–50%. Techniques such as semantic caching, load balancing, and circuit breakers not only improve system reliability but can also lower costs by 50%–70% while enhancing response times up to 100×.

Maintaining a strong API lifecycle is equally important. This includes proper versioning, regular audits, and proactive inventory tracking to quickly identify vulnerabilities and address performance issues.

When these practices are combined, they turn AI systems from costly experiments into efficient, reliable tools that deliver real business value. This framework reflects the strategic expertise Rebel Force brings to API-driven AI integration.

How Rebel Force Supports API-Driven AI Integration

Rebel Force builds on these proven strategies to provide customised solutions for seamless API-driven AI connectivity. Their expertise lies in creating data-focused systems that help organisations adopt these best practices for measurable returns. Using a structured four-phase process - Diagnose, Design, Execute, and Validate - they collaborate with teams to pinpoint connectivity challenges, design scalable architectures, and deploy solutions that deliver impactful results.

Their approach tackles the unique complexities of AI systems, employing methods like semantic caching, intelligent model routing, and extensive monitoring. Whether through focused 12-week Enablement Sprints for quick progress or year-long Enablement Programs for deeper transformation, Rebel Force ensures your API-driven AI systems are built to support sustainable growth. They prioritise security, performance, and cost efficiency, making sure your AI investments align with long-term business goals.

FAQs

How do I secure AI APIs beyond basic OAuth 2.0?

To go beyond the basics of OAuth 2.0, consider implementing advanced security practices for your AI APIs. Start with identity-based access control to ensure only verified users or systems can access your API. Combine this with a zero-trust architecture, which continuously verifies every access request, regardless of its origin.

Layered security is key. Use multi-factor authentication to add extra protection, and manage tokens carefully to prevent misuse. Incorporating real-time anomaly detection can help you spot unusual behavior instantly, reducing the risk of breaches.

Another important step is maintaining visibility into AI-generated requests. This means closely monitoring how APIs are being used and identifying any unusual patterns. Pair this with adaptive validation measures - these adjust based on the context of each interaction, helping to handle unpredictable API behavior effectively.

By combining these strategies, you can build a robust defense system that keeps your AI APIs secure in an ever-evolving threat landscape.

What’s the best way to rate-limit AI APIs by tokens and requests?

To manage AI API usage effectively, you can implement token quotas to regulate the number of tokens processed by each user or application within a specific timeframe. Additionally, set request rate limits based on factors like user, IP address, or API key to keep traffic under control. To handle errors smoothly, incorporate exponential backoff and retry mechanisms.

For enforcement and monitoring, consider using API management tools or developing custom middleware. These solutions can help ensure limits are adhered to while tracking usage effectively.

What monitoring metrics identify AI API issues fastest?

When it comes to catching AI API problems early, a few key metrics can make all the difference:

Latency (p95): This measures how long it takes for your API to respond. Keeping an eye on the 95th percentile ensures you're tracking the slower responses, which can signal performance hiccups.

Error Rates: A spike in errors - like 4xx or 5xx status codes - can be a red flag for underlying issues.

Success Rates: A drop in successful responses might indicate a problem that needs immediate attention.

Real-time tracking of these metrics, especially response times and error codes, is crucial. It allows for quicker detection of issues, leading to faster fixes and a more reliable system overall.