
Modular Architecture in AI-Driven Enablement Systems

Modular AI systems are changing how businesses use artificial intelligence. Instead of relying on large, single-purpose systems, organizations are now building smaller, specialized modules that work together. This approach improves efficiency, reduces costs, and makes systems easier to maintain.

Key Takeaways:

Independent Modules: Each module handles a specific task (e.g., data retrieval, sentiment analysis) and communicates via APIs.

Cost Savings: Companies report 30% lower costs and 25% faster project timelines compared to older systems.

Scalability: Modules can scale individually, avoiding the need to over-provision resources.

Maintenance: Issues are isolated to specific components, simplifying updates and troubleshooting.

Real-World Use: Examples such as MASAI (from Microsoft Research India) and ORCHESTRA show how modular systems are applied to specialized tasks, with MASAI outperforming standard RAG baselines on SWE-bench Lite.

This shift mirrors the move from monolithic software to microservices, offering businesses a better way to build and manage AI systems. Modular architecture is transforming not just AI but also how companies approach problem-solving and ROI measurement.


Key Building Blocks of Modular AI Architectures

To grasp modular AI systems, it's essential to see how distinct components come together to form a cohesive whole. Each part has a specific role, ensuring the system remains adaptable and dependable. Together, these elements enable scalable and efficient performance across the AI infrastructure.

Machine learning model registries serve as the central hub for managing versioned AI models. These registries handle version control, store critical metadata (like model_name, artifact_uri, git_commit), and oversee transitions between stages such as Candidate, Staging, and Production. By linking artifacts to specific code commits and training data, they ensure deployments are both reproducible and traceable.
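As a minimal sketch of the registry idea, the snippet below keeps versioned entries in memory and enforces stage transitions. The metadata fields and stage names come from the paragraph above; the `ModelRegistry` class, the transition rules, and the example URI are assumptions for illustration, not the API of any particular registry product.

```python
from dataclasses import dataclass

# Stage names come from the text; which transitions are allowed is an
# assumption made for this sketch.
ALLOWED_TRANSITIONS = {
    "Candidate": {"Staging"},
    "Staging": {"Production", "Candidate"},
    "Production": set(),
}


@dataclass
class ModelVersion:
    model_name: str
    version: int
    artifact_uri: str
    git_commit: str
    stage: str = "Candidate"


class ModelRegistry:
    """Hypothetical in-memory registry keyed by (model_name, version)."""

    def __init__(self) -> None:
        self._versions: dict[tuple[str, int], ModelVersion] = {}

    def register(self, model_name: str, artifact_uri: str, git_commit: str) -> ModelVersion:
        # The next version is one more than the highest version already stored.
        existing = [v for (name, v) in self._versions if name == model_name]
        version = max(existing, default=0) + 1
        entry = ModelVersion(model_name, version, artifact_uri, git_commit)
        self._versions[(model_name, version)] = entry
        return entry

    def transition(self, model_name: str, version: int, new_stage: str) -> ModelVersion:
        entry = self._versions[(model_name, version)]
        if new_stage not in ALLOWED_TRANSITIONS[entry.stage]:
            raise ValueError(f"cannot move {entry.stage} -> {new_stage}")
        entry.stage = new_stage
        return entry


registry = ModelRegistry()
v1 = registry.register("sentiment", "s3://example-bucket/sentiment/1", "abc1234")
registry.transition("sentiment", v1.version, "Staging")
```

Because every entry carries its `git_commit` and `artifact_uri`, a deployment can be traced back to the exact code and artifact that produced it.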

Data pipelines transform raw data into features and labels, forming the backbone of AI workflows. Separating feature engineering into an independent process eliminates discrepancies between training and production environments. This modular setup guarantees consistent data flows for both model training and real-time inference. A shared storage layer ties these pipelines together, replacing fragmented and improvised data infrastructures.
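One way to picture the separation of feature engineering is a single feature function that both the training path and the inference path call. The sketch below assumes simple text records; the field names (`text`, `label`) and the features themselves are hypothetical.

```python
def build_features(record: dict) -> dict:
    """Single source of truth for feature logic (field names are hypothetical)."""
    text = record.get("text", "")
    return {
        "length": len(text),
        "word_count": len(text.split()),
        "has_question": "?" in text,
    }


def training_rows(records: list[dict]) -> list[tuple[dict, int]]:
    # Offline path: features plus the known label for each historical record.
    return [(build_features(r), r["label"]) for r in records]


def inference_features(request: dict) -> dict:
    # Online path: the same function, with no label available.
    return build_features(request)
```

Since both paths share `build_features`, a change to the feature logic reaches training and production together, which is what removes the training/serving discrepancy described above.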

Microservices and APIs keep modular systems organized and efficient. Instead of relying on a single, monolithic application, functionality is broken into smaller, containerized services, each handling a specific task. Using standardized APIs, such as REST or GraphQL, these services can communicate seamlessly while maintaining independent development cycles. Companies adopting this approach have reported 30% faster deployment times and a 20% reduction in operational costs compared to traditional architectures.

Inference engines are tasked with turning features and trained models into real-time predictions. These engines operate independently, scaling to meet demand rather than following static training schedules. This ensures rapid, sub-second responses. When demand spikes, individual modules can scale up without requiring the entire system to over-provision, keeping resources efficient and costs manageable.
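To illustrate per-module scaling, the sketch below sizes each module from its own load instead of sizing the whole system for the busiest one. The module names, request rates, and per-replica capacities are made-up numbers, and real autoscalers use richer signals than a single request rate.

```python
import math


def replicas_needed(requests_per_second: float,
                    capacity_per_replica: float,
                    min_replicas: int = 1) -> int:
    """Replicas required for one module, never fewer than min_replicas."""
    return max(min_replicas, math.ceil(requests_per_second / capacity_per_replica))


# Hypothetical load and capacity figures for two modules.
load = {"retrieval": 120.0, "sentiment": 15.0}
capacity = {"retrieval": 50.0, "sentiment": 40.0}

plan = {name: replicas_needed(load[name], capacity[name]) for name in load}
# plan == {'retrieval': 3, 'sentiment': 1}
```

Only the retrieval module grows when its traffic spikes; the sentiment module stays at a single replica.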

Business Benefits of Modular Architecture

Scalability and Flexibility

Modular AI systems are designed to grow horizontally, allowing businesses to add or replace specific components rather than overhauling an entire infrastructure. This means when a particular service faces increased demand, companies can focus resources on that specific area, streamlining deployments and reducing operational expenses.

The modular approach also makes it easier to upgrade individual tools or models without disrupting the overall workflow [3, 17]. For example, businesses can use large models for strategic decision-making while assigning repetitive, high-volume tasks to smaller, more cost-efficient models. This method helps manage costs effectively as usage grows, unlike traditional monolithic systems that often require extensive resources to scale.
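The split between large and small models can be sketched as a routing table. Everything here is hypothetical: the task types, the two stand-in model functions, and the choice to fall back to the large model for unknown tasks.

```python
from typing import Callable


def small_model(prompt: str) -> str:
    # Stand-in for a cheaper, specialized model.
    return f"[small] {prompt}"


def large_model(prompt: str) -> str:
    # Stand-in for a larger general-purpose model.
    return f"[large] {prompt}"


# Hypothetical mapping from task type to the model that should handle it.
ROUTES: dict[str, Callable[[str], str]] = {
    "classification": small_model,
    "extraction": small_model,
    "strategy": large_model,
}


def route(task_type: str, prompt: str) -> str:
    # Unknown task types fall back to the large model (a design assumption).
    handler = ROUTES.get(task_type, large_model)
    return handler(prompt)
```

Swapping a model then means changing one entry in `ROUTES`, leaving callers untouched.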

Easier Maintenance and Upgrades

Another key advantage is the simplicity modular design brings to system maintenance. Failures can be pinpointed to specific components - whether it’s in routing, retrieval, or synthesis - making troubleshooting more focused. George Strong of Instill AI highlights:

"modular systems are easier to maintain because updates can be applied to individual components without disrupting the entire platform".

AI21 Labs echoes this sentiment:

"when a failure can be localized to a specific component, remediation can be targeted to that component rather than requiring retraining or rescaling of the entire system".

This targeted approach reduces the technical debt that often comes with monolithic systems. With clearly defined roles for each module, testing and verification are straightforward. Developers can quickly address underperforming components or replace them with updated versions, avoiding the need for a complete system overhaul.
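The idea of localizing failures can be sketched as a pipeline runner that reports which stage failed. The stage names mirror the routing, retrieval, and synthesis example above; the stage bodies and the simulated timeout are invented for illustration.

```python
from typing import Any, Callable


def run_pipeline(stages: list[tuple[str, Callable[[Any], Any]]], payload: Any) -> dict:
    """Run stages in order and name the stage that failed, if any."""
    for name, stage in stages:
        try:
            payload = stage(payload)
        except Exception as exc:
            return {"ok": False, "failed_stage": name, "error": str(exc)}
    return {"ok": True, "result": payload}


def routing(query: str) -> dict:
    return {"query": query, "index": "docs"}


def retrieval(state: dict) -> dict:
    # Simulated failure so the example shows where the fault is reported.
    raise TimeoutError("index unavailable")


def synthesis(state: dict) -> str:
    return "answer"


outcome = run_pipeline(
    [("routing", routing), ("retrieval", retrieval), ("synthesis", synthesis)],
    "refund policy",
)
# outcome == {'ok': False, 'failed_stage': 'retrieval', 'error': 'index unavailable'}
```

The report points directly at the retrieval module, so the fix can target that component alone.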

Faster ROI

The benefits of easier maintenance also translate into quicker returns on investment. Modular architecture speeds up time-to-value by enabling teams to develop different modules simultaneously. Pre-built components further simplify validation processes and allow businesses to track ROI at the module level [5, 19]. As Jens Eriksvik from Algorithma puts it:

"Enterprises don't win by owning the biggest model, they win by having the platform where new agents can show up, plug in, and start delivering value by lunchtime".

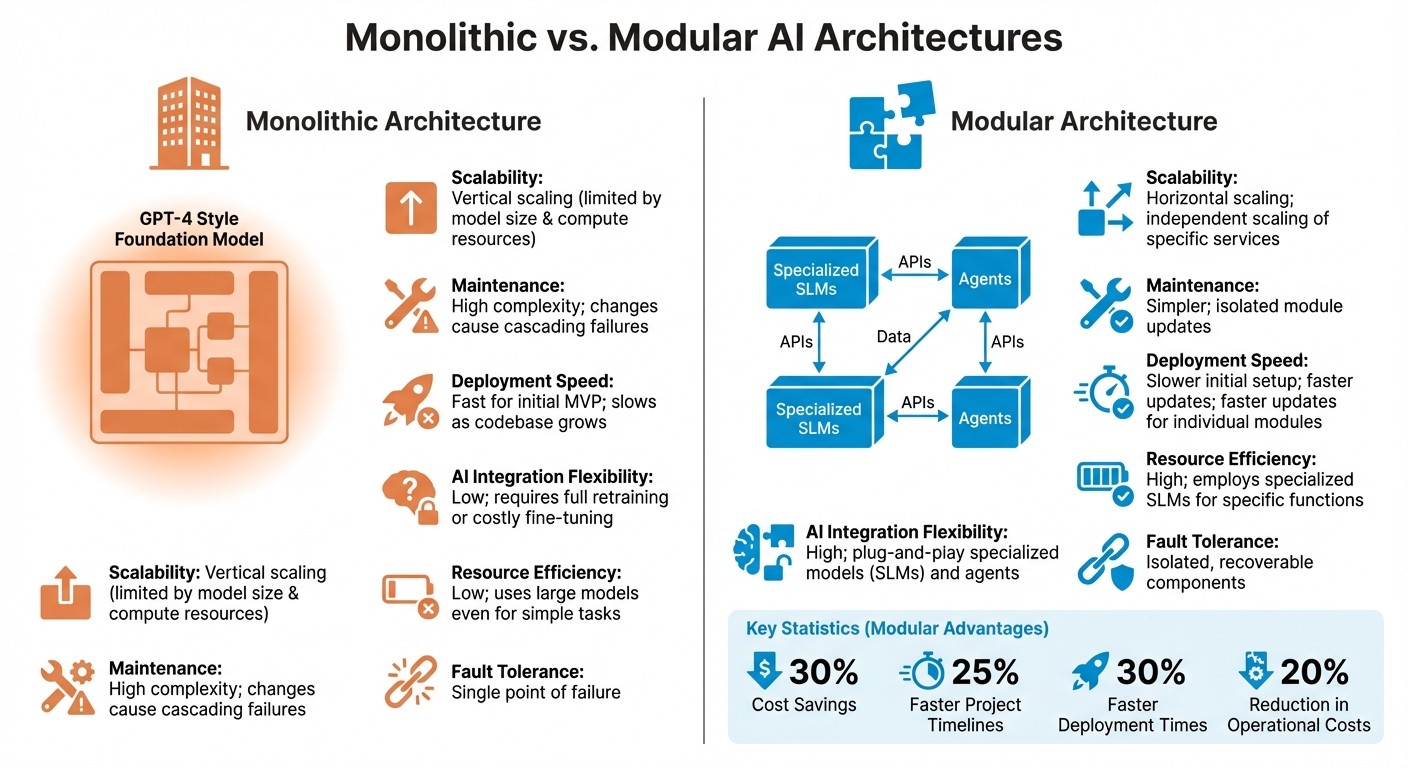

Monolithic vs. Modular Architectures

Monolithic vs Modular AI Architecture Comparison

When comparing monolithic and modular architectures, it's clear why modular systems are gaining traction in AI development. Monolithic architectures integrate data ingestion, model training, and inference into a single codebase. They often depend on large, centralized foundation models like GPT-4 for reasoning and execution, which can create bottlenecks and limit overall performance [3,17].

On the other hand, modular architectures take a more flexible approach by breaking functionality into smaller, specialized components that communicate through APIs. This design, often referred to as a "Compound AI System" (CAIS), combines multiple models, retrievers, and databases. The result? Better performance and adaptability compared to relying on a single foundation model.

Scalability: Two Paths

The way these systems scale is one of their most striking differences. Monolithic systems rely on vertical scaling, meaning they require increasingly powerful and expensive hardware to handle larger workloads. Modular systems, however, scale horizontally. This allows organizations to add or replace specific components - like sentiment analyzers or chatbots - without affecting the entire system. This flexibility makes modular setups more adaptable and cost-effective over time [3,6].

Security and Fault Tolerance

Security and fault tolerance also set these architectures apart. Monolithic systems are vulnerable to single points of failure, where one compromised or malfunctioning component can jeopardize the entire system. Modular systems mitigate this risk with "security sandboxing", isolating failures within specific modules to prevent widespread issues. As Enrico Piovesan, a Platform Architect, highlights:

"Our systems must become more modular because our code is becoming more modular. AI doesn't write 10,000-line services. It writes atomic functions, narrow tasks, and discrete capabilities".

Key Differences at a Glance

The table below summarizes the main distinctions between monolithic and modular architectures:

| Feature | Monolithic Architecture | Modular Architecture |
|---|---|---|
| Scalability | Vertical; limited by model size and compute resources | Horizontal; independent scaling of specific services or agents |
| Maintenance | High complexity; changes can cause cascading failures | Simpler; isolated module updates |
| Deployment Speed | Fast for initial MVP; slows as codebase grows | Slower initial setup; faster updates for individual modules |
| AI Integration Flexibility | Low; requires full retraining or costly fine-tuning | High; "plug-and-play" specialized models (SLMs) and agents |
| Resource Efficiency | Low; uses large models even for simple tasks | High; employs specialized SLMs for specific functions |
| Fault Tolerance | Single point of failure | Isolated, recoverable components |

This comparison highlights why modular systems are becoming the preferred choice for organizations aiming to build scalable, secure, and efficient AI ecosystems.

Recent Research on Modular AI Systems

Recent studies are shedding light on how modular AI architectures are driving advancements in business systems. By breaking AI systems into specialized components, researchers have found that these architectures can tackle complex challenges more efficiently than traditional, monolithic designs. The benefits aren't just theoretical - there's growing evidence of measurable results in real-world applications.

Microservices in AI Agents

One standout example is the ORCHESTRA architecture, which uses microservices to create structured, AI-driven workflows. This system employs a Dockerized Manager to direct tasks to specialized workers, each handling functions like speech recognition, emotion analysis, or content retrieval. Researchers have noted:

"Industry stakeholders are willing to incorporate AI systems in their pipelines, therefore they want agentic flexibility without losing the guaranties and auditability of fixed pipelines".

In early 2026, ORCHESTRA was successfully deployed as an interactive museum guide. It managed multimodal inputs - speech, text, and images - while maintaining policy-compliant responses through built-in safety mechanisms.
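The manager-and-workers pattern described for ORCHESTRA can be sketched in a few lines. This is not ORCHESTRA's code: in-process functions stand in for containerized worker services, and the `Manager` class, task names, and worker behavior are all hypothetical.

```python
from typing import Callable


class Manager:
    """Hypothetical dispatcher that routes each task to a registered worker."""

    def __init__(self) -> None:
        self._workers: dict[str, Callable[[str], str]] = {}

    def register(self, task: str, worker: Callable[[str], str]) -> None:
        self._workers[task] = worker

    def dispatch(self, task: str, payload: str) -> str:
        # Refuse unknown tasks explicitly instead of guessing a worker.
        if task not in self._workers:
            raise KeyError(f"no worker registered for task '{task}'")
        return self._workers[task](payload)


manager = Manager()
manager.register("speech_recognition", lambda audio: f"transcript of {audio}")
manager.register("emotion_analysis", lambda text: "neutral")

reply = manager.dispatch("emotion_analysis", "hello")
```

Restricting dispatch to registered workers is one simple way to keep agentic flexibility inside an auditable, fixed set of capabilities.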

A different approach comes from Microsoft Research India with MASAI (Modular Architecture for Software-engineering AI Agents). MASAI breaks down complex coding issues into five specialized sub-agents: Test Template Generator, Issue Reproducer, Edit Localizer, Fixer, and Ranker. This modular design achieved a 28.33% resolution rate on the SWE-bench Lite dataset, far surpassing the 4.33% rate of standard RAG baselines. A standout performance came from the Edit Localizer sub-agent, which achieved a 75% localization rate at the file level, compared to 61% for monolithic systems like SWE-agent. The average cost per issue was approximately $1.96.

These results highlight the practical advantages of modular systems, particularly in addressing domain-specific challenges.

Domain-Specific Models

The growing focus on domain-specific models within modular frameworks is tackling a key issue: general-purpose AI often falls short when addressing specialized business needs. Microsoft Research's Akshay Nambi explains:

"ATLAS enables small language models to effectively operate in large-scale tool environments through reinforcement fine-tuning that learns context control and execution structure".

The ATLAS Framework addresses a common problem in long-running workflows - context saturation. By employing "incremental tool loading", smaller language models can manage large toolspaces without overwhelming their context windows. This approach reduces computational strain and allows specialized models to focus on specific tasks, avoiding the inefficiencies tied to massive foundation models.
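The general idea behind loading tools incrementally can be sketched as exposing only the tools relevant to the current step. This is not the ATLAS method, which the quote above describes as learned through reinforcement fine-tuning; the keyword match below is a deliberately simple stand-in, and the tool catalog is hypothetical.

```python
# Hypothetical tool catalog: name -> one-line description.
TOOL_CATALOG = {
    "search_orders": "Look up orders by customer id",
    "refund_order": "Issue a refund for an order",
    "send_email": "Send an email to a customer",
    "create_ticket": "Open a support ticket",
}


def select_tools(step_keywords: set[str], max_tools: int = 2) -> dict[str, str]:
    """Return at most max_tools tools whose name or description matches a keyword."""
    scored = []
    for name, description in TOOL_CATALOG.items():
        text = f"{name} {description}".lower()
        hits = sum(1 for keyword in step_keywords if keyword in text)
        if hits:
            scored.append((hits, name))
    # Most keyword hits first; ties are broken alphabetically by tool name.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return {name: TOOL_CATALOG[name] for _, name in scored[:max_tools]}
```

For a refund step, `select_tools({"refund"})` puts only `refund_order` into the prompt, so the rest of the catalog never consumes context.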

The MASAI team at Microsoft Research India also emphasized the importance of this modular approach:

"As the problem complexity increases, it becomes difficult to devise a single, over-arching strategy that works across the board".

By sidestepping unnecessarily long processing paths, modular systems not only cut down on inference costs but also maintain performance levels by avoiding irrelevant context.

These advancements naturally lead to communication standards among AI modules, the most prominent of which is the Model Context Protocol.

Model Context Protocols

The Model Context Protocol (MCP) is setting new standards for how AI components interact. It treats plugins as independent network services that communicate via JSON-RPC over transports like stdio, Server-Sent Events, or WebSockets. This design ensures that any malfunction is confined to the affected module, limiting broader system disruptions.

MCP defines three main content types: Tools (executable code for tasks like API calls), Resources (data or documentation), and Prompts (templates for workflows). Its capability negotiation feature allows systems to adapt to older or limited server versions, ensuring backward compatibility.
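To make the message format concrete, the sketch below builds a JSON-RPC 2.0 request and parses a response. The envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`, `error`) follow JSON-RPC 2.0; the `tools/call` method name and its `name`/`arguments` parameters reflect my reading of the MCP specification and should be checked against the current version. The `get_weather` tool and the helper functions are hypothetical.

```python
import json
from itertools import count

_ids = count(1)  # simple source of unique request ids


def jsonrpc_request(method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request with a fresh id."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": method,
        "params": params,
    })


def parse_response(raw: str) -> dict:
    """Return the result payload, or raise if the server reported an error."""
    message = json.loads(raw)
    if "error" in message:
        error = message["error"]
        raise RuntimeError(f"{error['code']}: {error['message']}")
    return message["result"]


# Hypothetical tool invocation; "get_weather" is not a real MCP tool.
call = jsonrpc_request("tools/call", {"name": "get_weather", "arguments": {"city": "Oslo"}})
```

Because a failure comes back as a structured `error` object on that one request, the caller can handle it locally without affecting other modules.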

In February 2026, the Radix network introduced "radix-context", a collection of 19 curated reference documents for AI coding agents. These documents, aligned with the agents.md standard, enable tools like Claude and Cursor to auto-discover and use deep-reference material for Scrypto code generation.

For security, MicroVMs like Firecracker are being used to isolate high-risk third-party AI plugins. These MicroVMs are highly efficient, with boot times of about 125 milliseconds and memory usage of just ~5MB per VM. This level of isolation helps contain security risks, preventing them from spreading across the system.

These findings underline how modular designs are paving the way for scalable, efficient, and secure AI solutions tailored to business operations.

Rebel Force's Modular Enablement Methodology

Rebel Force takes the principles of modular systems and applies them to streamline and improve business operations. Their approach focuses on pinpointing and addressing the "dominant constraint" - the specific bottleneck that disrupts organizational flow. Instead of jumping straight into tools or strategies, they begin every project with a careful diagnosis.

"Every engagement starts with diagnosis, not design. We identify the core constraint - the point where flow breaks - before touching tools, teams, or strategy."

– Rebel Force

With over a decade of experience and more than 220 successful processes under their belt, Rebel Force has achieved an average ROI of 70%. Their unique methodology involves assembling "Rebel Flow Units", which are cross-functional teams consisting of an Enablement Lead, AI/Data Specialist, Process Designer, Creative Technologist, and Performance Analyst. This diverse team structure ensures that technical implementation, process refinement, and performance tracking happen simultaneously. By doing so, they not only enhance AI capabilities but also deliver measurable business results.

The 4-Phase Process

Rebel Force’s projects follow a clear four-phase process: Diagnose, Design, Execute, and Validate. Here’s how it works:

Diagnose: Data and behavioral insights are analyzed to identify the dominant constraint - the main bottleneck in the system.

Design: A tailored enablement blueprint is created to address the identified bottleneck.

Execute: Solutions are implemented in measurable sprints by the flow teams.

Validate: ROI is measured, lessons are documented, and preparations are made for the next cycle.

This structured process reflects the advantages of modular systems, allowing for targeted updates, independent scaling, and measurable outcomes at every phase.

Enablement Sprints vs. Enablement Programs

Rebel Force offers two engagement models based on this 4-phase framework, each designed to suit different business needs.

Enablement Sprints: These are 12-week cycles aimed at quickly removing the dominant constraint for immediate breakthroughs.

Enablement Programs: These are 12-month cycles focused on gradual, structural transformation at a steady pace.

| Feature | Enablement Sprints | Enablement Programs |
|---|---|---|
| Duration | 12 weeks | 12 months |
| Focus | Immediate constraint removal | Long-term structural transformation |
| Tempo | Intense, focused cycles | Gradual, distributed enablement |
| Team Involvement | Rebel Flow Unit | Integrated with internal teams |
| ROI Measurement | Defined ROI per sprint | Continuous ROI tracking over time |

Both models use fixed pricing, calculated based on flow, ROI, and the compound effect. In the Sprint model, each cycle has a specific ROI target, while the Program model spreads the investment over the entire year. This flexibility allows businesses to choose a pace that aligns with their transformation goals while ensuring measurable outcomes.

Conclusion

Modular architecture is changing the way businesses approach AI-driven systems. Instead of relying on large, single-purpose models that require enormous computing power and risk system-wide failures, companies are adopting smaller, specialized components. These modules can be scaled, maintained, and updated independently. The benefits are clear: companies using modular AI architectures report 30% cost savings, 25% faster project completion, 30% quicker deployment times, and 20% lower operational costs compared to traditional monolithic systems. This shift not only improves technical efficiency but also delivers measurable business advantages.

Experts compare this evolution to previous technological shifts:

"In the same way cloud computing moved from mainframes to microservices, AI is moving from monoliths to modularity".

This modular approach offers a strategic edge, enabling companies to adapt faster, reduce technical debt, and achieve a stronger return on investment.

Rebel Force exemplifies how modular principles drive transformation. With over 220 successful processes and an average ROI of 70%, their constraint-driven methodology proves that modular thinking can extend beyond software. Their 4-phase process - Diagnose, Design, Execute, Validate - aligns with modular system best practices. By identifying bottlenecks, crafting targeted solutions, implementing them in measurable steps, and independently validating results, they ensure a streamlined approach to problem-solving.

Through their 12-week Enablement Sprints or year-long Enablement Programs, Rebel Force creates systems that scale effectively, adapt swiftly, and deliver transparent, measurable outcomes. Their Rebel Flow Units - cross-functional teams with no silos - ensure that technical implementation, process design, and performance tracking happen simultaneously. This integrated approach drives meaningful operational transformation, going far beyond simple technical upgrades.

FAQs

When should we move from a monolithic AI system to modular?

Switch to a modular AI system when growing complexity, scalability, reliability, or shifting organizational requirements make relying on a single, large model impractical. Modular systems use specialized components to tackle specific tasks more efficiently, offering greater flexibility and improved performance as AI systems continue to advance.

What’s the minimum set of modules to start with?

To get started, you'll need two key modules: a specialized hunter agent and an insights creator. The hunter agent scans knowledge bases to identify connections, while the insights creator focuses on extracting high-value insights. These two components act as the backbone of a modular AI system, laying the groundwork for effective functionality and performance.

How is ROI measured for each AI module?

To determine the ROI of each AI module, its effects are evaluated across three key areas: component, repository, and commit levels. This method provides a clear, data-driven foundation for discussing ROI with executives and stakeholders.
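As a rough illustration of module-level tracking, the sketch below applies the standard ROI formula, (benefit - cost) / cost, to each module separately. The module names and all figures are invented, and the sketch does not model the component, repository, and commit levels mentioned above.

```python
def module_roi(benefit: float, cost: float) -> float:
    """Return ROI as a fraction: 0.5 means a 50% return on the module's cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


# Hypothetical benefit and cost figures per module, in the same currency.
modules = {
    "retrieval": {"benefit": 18_000.0, "cost": 12_000.0},
    "sentiment": {"benefit": 9_000.0, "cost": 10_000.0},
}

roi_by_module = {name: module_roi(**figures) for name, figures in modules.items()}
```

Keeping the calculation per module shows that, in this made-up example, retrieval pays for itself while sentiment does not.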